Table of Contents

0:00 Recap

0:40 A simple example (more in the practicals)

3:44 PyTorch tensor: requires_grad field

6:44 PyTorch backward function

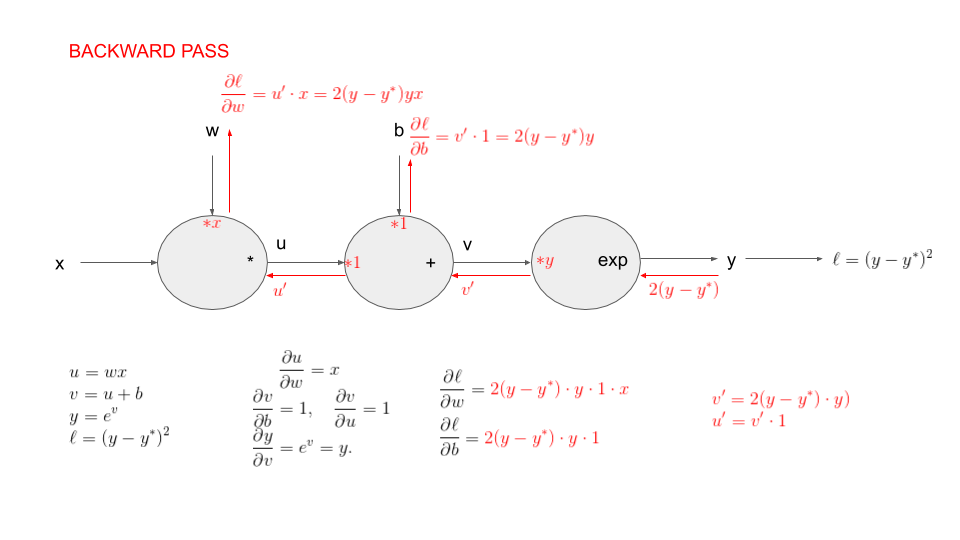

9:05 The chain rule on our example

16:00 Linear regression

18:00 Gradient descent with NumPy...

27:30 ... with PyTorch tensors

31:30 Using autograd

34:35 Using a neural network (linear layer)

39:50 Using a PyTorch optimizer

44:00 Algorithm: how automatic differentiation works
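
The last item of the outline, how automatic differentiation works, can be illustrated with a minimal sketch of reverse-mode differentiation on scalars. It uses plain Python rather than PyTorch, and the `Var` class with its `parents` and `backward` members is a hypothetical helper written for this illustration, not the implementation used in the video or in PyTorch. Each operation records its inputs together with the local derivative, and `backward` applies the chain rule by walking the graph from the output to the inputs.

```python
import math


class Var:
    """Scalar node of a computation graph (hypothetical helper, not a PyTorch class)."""

    def __init__(self, value, parents=()):
        self.value = value
        self.grad = 0.0
        # Each entry is (parent_node, d(self)/d(parent)).
        self.parents = parents

    def __add__(self, other):
        other = other if isinstance(other, Var) else Var(other)
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    def __mul__(self, other):
        other = other if isinstance(other, Var) else Var(other)
        return Var(self.value * other.value,
                   ((self, other.value), (other, self.value)))

    def exp(self):
        out = math.exp(self.value)
        return Var(out, ((self, out),))

    def backward(self):
        # Build a topological order so that a node's gradient is complete
        # before it is propagated to its parents.
        order, seen = [], set()

        def visit(node):
            if id(node) not in seen:
                seen.add(id(node))
                for parent, _ in node.parents:
                    visit(parent)
                order.append(node)

        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            for parent, local in node.parents:
                # Chain rule: accumulate local derivative times upstream gradient.
                parent.grad += local * node.grad


# f(x, w) = x * w + exp(w), so df/dx = w and df/dw = x + exp(w).
x, w = Var(2.0), Var(3.0)
f = x * w + w.exp()
f.backward()
print(x.grad, w.grad)
```

Gradients are accumulated with `+=` because a variable such as `w` can reach the output through several paths, and the contributions of all paths must be summed.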

Automatic differentiation: a simple example (static notebook, code on GitHub, or open in Colab)

Notebook used in the video for the linear regression, which you can also open in Colab.

Backprop slide (used for the practical below)

To check your understanding of automatic differentiation, you can do the quizzes.

Practicals in Colab: Coding backprop.

Adapt your code to solve the following challenge:

Some small modifications:

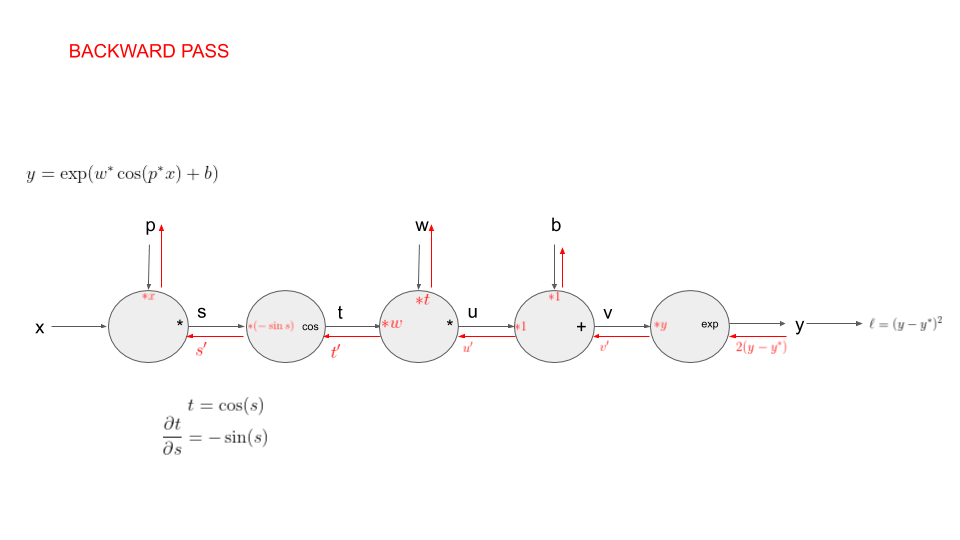

First modification: we now generate points where the output is obtained by applying a deterministic function to the input, with two parameters. Our goal is still to recover these two parameters from the observations.

Second modification: we now generate points where the output is obtained by applying a deterministic function to the input, with three parameters. Our goal is still to recover the parameters from the observations.
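
As a rough illustration of the kind of adaptation the challenge asks for, here is a sketch of gradient descent with a hand-coded chain rule for a two-parameter case. The function `model`, the values `w_true` and `b_true`, the grid of inputs, the learning rate, and the number of steps are all assumptions chosen for this example; the function used in the practical may be different. A third parameter would be handled the same way, by adding its partial derivative to the backward pass.

```python
import math

# Hypothetical ground-truth parameters and model, chosen for illustration only.
w_true, b_true = 0.5, -0.3


def model(x, w, b):
    # Assumed deterministic function: exp(w * cos(x) + b).
    return math.exp(w * math.cos(x) + b)


# Noise-free observations on a regular grid over [-3, 3].
xs = [-3.0 + 6.0 * i / 99 for i in range(100)]
ys = [model(x, w_true, b_true) for x in xs]

w, b = 0.0, 0.0   # initial guess
lr = 0.1          # learning rate (assumed value)
for step in range(5000):
    grad_w = grad_b = 0.0
    for x, y in zip(xs, ys):
        pred = model(x, w, b)
        # Chain rule with z = w * cos(x) + b and loss (pred - y)^2:
        # dL/dpred = 2 (pred - y), dpred/dz = pred, dz/dw = cos(x), dz/db = 1.
        dz = 2.0 * (pred - y) * pred
        grad_w += dz * math.cos(x)
        grad_b += dz
    # Gradient step on the mean squared error.
    w -= lr * grad_w / len(xs)
    b -= lr * grad_b / len(xs)

print(f"w = {w:.4f}, b = {b:.4f}")
```

Only the forward function and the local derivatives change compared with linear regression; the update rule itself stays the same.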