Table of Contents

The first attention mechanism was proposed in Neural Machine Translation by Jointly Learning to Align and Translate by Dzmitry Bahdanau, Kyunghyun Cho, Yoshua Bengio (presented at ICLR 2015).

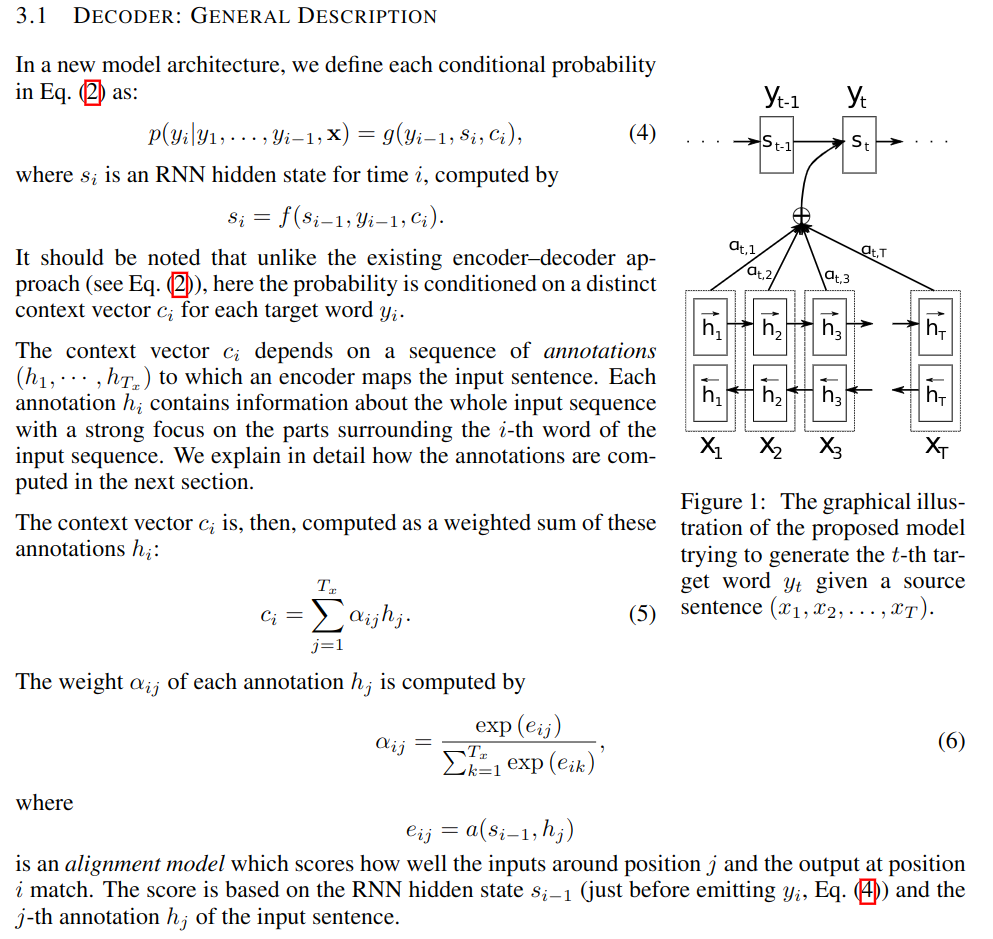

The task considered is English-to-French translation and the attention mechanism is proposed to extend a seq2seq architecture by adding a context vector in the RNN decoder so that, the hidden states for the decoder are computed recursively as where is the previously predicted token and predictions are made in a probabilist manner as where and are the current hidden state and context of the decoder.

Now the main novelty is the introduction of the context which is a weighted average of all the hidden states of the encoder: where is the length of the input sequence, are the corresponding hidden states of the decoder and . Hence the context allows passing direct information from the 'relevant' part of the input to the decoder. The coefficients are computed from the current hidden state of the decoder and all the hidden states from the encoder as explained below (taken from the original paper):

In Attention for seq2seq, you can play with a simple model and code the attention mechanism proposed in the paper. For the alignment network (used to define the coefficient ), we take a MLP with activations.

You will learn about seq2seq, teacher-forcing for RNNs and build the attention mechanism. To simplify things, we do not deal with batches (see Batches with sequences in Pytorch for more on that). The solution for this practical is provided in Attention for seq2seq- solution

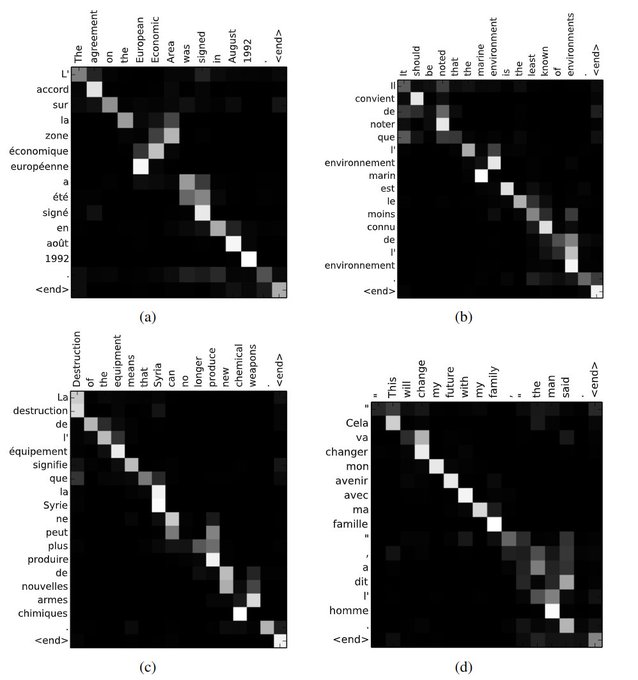

Note that each is a real number so that we can display the matrix of 's where ranges over the input tokens and over the output tokens, see below (taken from the paper):

We now describe the attention mechanism proposed in Attention Is All You Need by Vaswani et al. First, we recall basic notions from retrieval systems: query/key/value illustrated by an example: search for videos on Youtube. In this example, the query is the text in the search bar, the key is the metadata associated with the videos which are the values. Hence a score can be computed from the query and all the keys. Finally, the matched video with the highest score is returned.

We see that we can formalize this process as follows: if is the current query and and are all the keys and values in the database, we return

where .

Note that this formalism allows us to recover the way contexts were computed above (where the score function was called the alignment network). Now, we will change the score function and consider dot-product attention: . Note that for this definition to make sense, both the query and the key need to live in the same space and is the dimension of this space.

Given inputs in denoted by a matrix and a database containing samples in denoted by a matrix , we define:

Now self-attention is simply obtained with (so that ) and . In summary, self-attention layer can take as input any tensor of the form (for any ) has parameters:

and produce (with same and as for the input). is the dimension of the input and is a hyper-parameter of the self-attention layer:

with the convention that (resp. ) is the -th column of (resp. the -th column of ). Note that the notation might be a bit confusing. Recall that is always taking as input a vector and returning a (normalized) vector. In practice, most of the time, we are dealing with batches so that the function is taking as input a matrix (or tensor) and we need to normalize according to the right axis! Named tensor notation see below deals with this notational issue. I also find the interpretation given below helpful:

Mental model for self-attention: self-attention interpreted as taking expectation

where the mappings and represent query, key and value.

Multi-head attention combines several such operations in parallel, and is the concatenation of the results along the feature dimension to which is applied one more linear transformation.

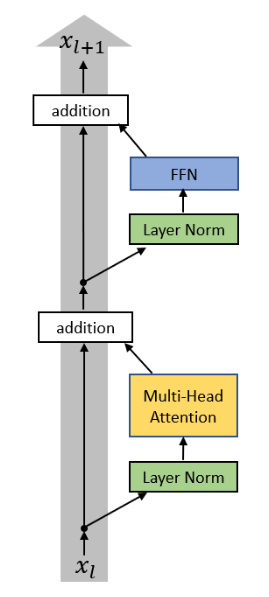

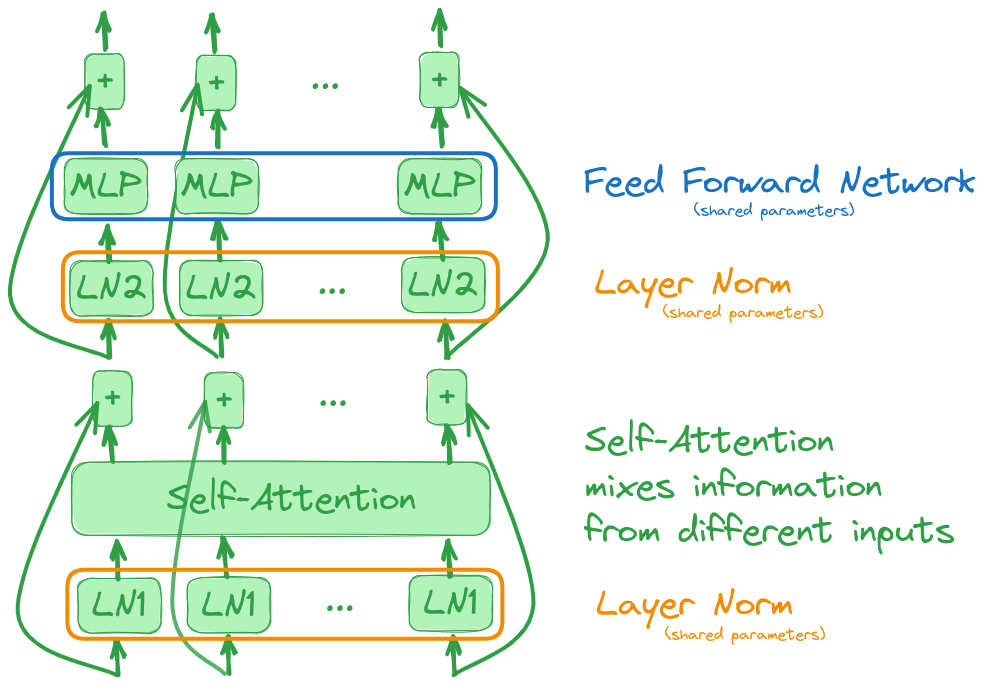

To finish the description of a transformer block, we need to define two last layers: Layer Norm and Feed Forward Network.

The Layer Norm used in the transformer block is particularly simple as it acts on vectors and standardizes it as follows: for , we define

and then the Layer Norm has two parameters and

where we used the natural broadcasting rule for subtracting the mean and dividing by std and is component-wise multiplication.

A Feed Forward Network is an MLP acting on vectors: for , we define

where , , , .

Each of these layers is applied on each of the inputs given to the transformer block as depicted below:

Note that this block is equivariant: if we permute the inputs, then the outputs will be permuted with the same permutation. As a result, the order of the input is irrelevant to the transformer block. In particular, this order cannot be used. The important notion of positional encoding allows us to take order into account. It is a deterministic unique encoding for each time step that is added to the input tokens.

Have a look at Brendan Bycroft’s beautifully crafted interactive explanation of the transformers architecture:

In Transformers using Named Tensor Notation, we derive the formal equations for the Transformer block using named tensor notation.

Now is the time to have fun building a simple transformer block and to think like transformers (open in colab).